dtypes if (i = 'string' ) & (i != 'y' ) ] num_var = for i in df. # one hot encoding and assembling encoding_var = for i in df. An estimator (either decision tree / random forest / gradient boosted trees) is also required as an input. In this case, I wanted the function to select either the top n features or based on a certain cut-off so these parameters are included as arguments to the function. The cross-validation function in the previous post provides a thorough walk-through on creating the estimator object and params needed. We begin by coding up the estimator object. The first is the estimator which returns a model and the second is the model/transformer which returns a dataframe. The important thing to remember is that the pipeline object has two components. Pipeline: A Pipeline chains multiple Transformers and Estimators together to specify an ML workflow. E.g., a learning algorithm is an Estimator which trains on a DataFrame and produces a model. E.g., an ML model is a Transformer which transforms a DataFrame with features into a DataFrame with predictions.Įstimator: An Estimator is an algorithm which can be fit on a DataFrame to produce a Transformer. Transformer: A Transformer is an algorithm which can transform one DataFrame into another DataFrame. E.g., a DataFrame could have different columns storing text, feature vectors, true labels, and predictions.

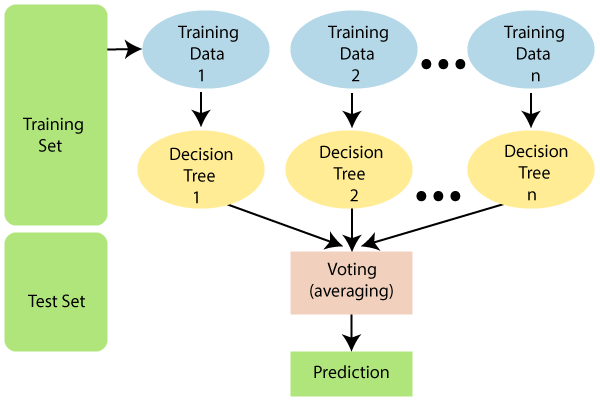

Now let us learn to build a new pipeline object that makes the above task easy!įirst a bit of theory as taken from the ML pipeline documentation:ĭataFrame: This ML API uses DataFrame from Spark SQL as an ML dataset, which can hold a variety of data types. transform (df3 ) Building the estimator function

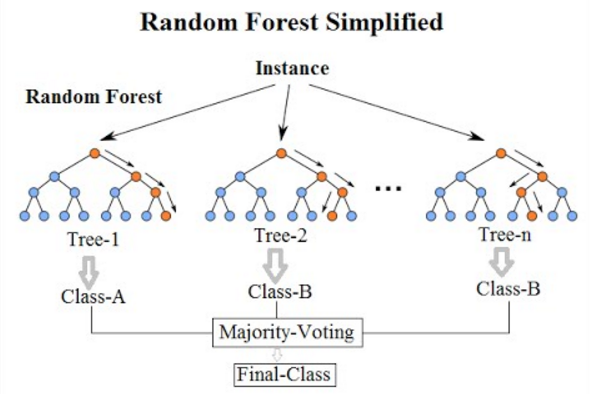

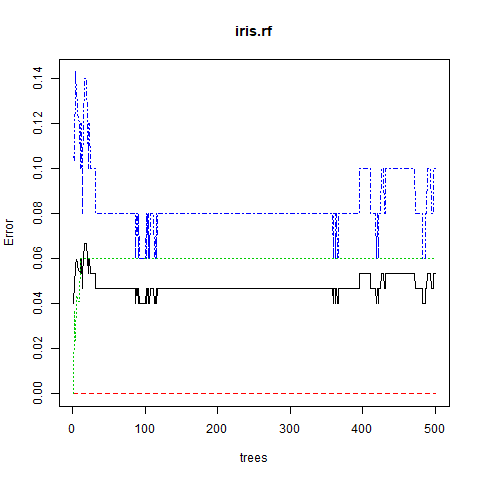

drop ( 'rawPrediction', 'probability', 'prediction' ) rf2 = RandomForestClassifier (labelCol = "label", featuresCol = "features2", seed = 8464, numTrees = 10, cacheNodeIds = True, subsamplingRate = 0.7 ) mod2 = rf2. Here I just run most of these tasks as part of a pipeline.ĭf3 = df3. Data Preprocessingīefore we run the model on the most relevant features, we would first need to encode the string variables as binary vectors and run a random forest model on the whole feature set to get the feature importance score. Let us take a look at how to do feature selection using the feature importance score the manual way before coding it as an estimator to fit into a Pyspark pipeline. The number of categories for each string type is relatively small which makes creating binary indicator variables / one-hot encoding a suitable pre-processing step. Let us take a look at what is represented by each variable that is of string type.įor i in df. In machine learning speak it might also lead to the model being overfitted. Converting strings to a binary indicator variable / dummy variable takes up quite a few degrees of freedom. There are quite a few variables that are encoded as a string in this dataset. It's always nice to take a look at the distribution of the variables I use a local version of spark to illustrate how this works but one can easily use a yarn cluster instead. Here, I use the feature importance score as estimated from a model (decision tree / random forest / gradient boosted trees) to extract the variables that are plausibly the most important.įirst, let's setup the jupyter notebook and import the relevant functions. I find Pyspark's MLlib native feature selection functions relatively limited so this is also part of an effort to extend the feature selection methods. 
A pipeline is a fantastic concept of abstraction since it allows the analyst to focus on the main tasks that needs to be carried out and allows the entire piece of work to be reusable.Īs a fun and useful example, I will show how feature selection using feature importance score can be coded into a pipeline. Recently, I have been looking at integrating existing code in the pyspark ML pipeline framework. This is an extension of my previous post where I discussed how to create a custom cross validation function. In this post I discuss how to create a new pyspark estimator to integrate in an existing machine learning pipeline.
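The selection rule described in this post (keep either the top n features or those above a cut-off) can be sketched independently of Spark. The block below is a minimal illustration under stated assumptions, not the estimator from the post: the function name `select_features`, its parameter names, and the selector labels `"numTopFeatures"` and `"threshold"` are all hypothetical. In PySpark, the scores would plausibly come from a fitted tree model's `featureImportances` vector, and the returned indices could then be passed to something like `VectorSlicer` to subset the feature column.

```python
from typing import List, Sequence, Tuple


def select_features(
    names: Sequence[str],
    importances: Sequence[float],
    selector_type: str = "numTopFeatures",  # hypothetical label, not a Spark param
    num_top_features: int = 20,
    cutoff: float = 0.01,
) -> List[Tuple[int, str, float]]:
    """Return (index, name, score) for the selected features, highest score first."""
    if len(names) != len(importances):
        raise ValueError("names and importances must have the same length")

    # Rank all features by importance score, highest first.
    # sorted() is stable, so tied features keep their original order.
    ranked = sorted(
        (
            (idx, name, float(score))
            for idx, (name, score) in enumerate(zip(names, importances))
        ),
        key=lambda item: item[2],
        reverse=True,
    )

    if selector_type == "numTopFeatures":
        # Keep the n highest-scoring features.
        return ranked[:num_top_features]
    if selector_type == "threshold":
        # Keep every feature whose score is strictly above the cut-off.
        return [item for item in ranked if item[2] > cutoff]
    raise ValueError(f"unknown selector_type: {selector_type!r}")


# Illustrative usage with made-up feature names and scores.
example_names = ["f_a", "f_b", "f_c", "f_d"]
example_scores = [0.10, 0.05, 0.60, 0.25]
top_two = select_features(example_names, example_scores, num_top_features=2)
above_cutoff = select_features(
    example_names, example_scores, selector_type="threshold", cutoff=0.08
)
```

Returning the original column indices alongside the names is a deliberate choice here: the indices are what a vector-slicing step would need, while the names make the result easy to inspect.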
